In my earlier articles, “A Minimal Evolving AI Brain for Real Software Development” and “The AI That Dreams in Markdown,” I shared an idea that kept growing in my mind:

what if AI didn’t just answer questions — what if it lived inside a structured memory, learned from it, and slowly became more consistent over time?

That idea became the .mind file system.

At first, it felt almost magical.

Markdown files, carefully written, linked together, forming a living knowledge graph.

An AI could read them, understand context, and continue work without starting from zero every time.
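To make the "linked Markdown as a knowledge graph" idea concrete, here is a minimal sketch of reading a set of notes as an adjacency map. It assumes notes are `.md` files under a `.mind` directory that link to each other with ordinary relative Markdown links such as `[design](architecture.md)`, not wiki-style `[[links]]`. The functions `extract_links`, `build_graph`, and `load_notes` are hypothetical names for illustration, not part of any existing tool.

```python
import re
from pathlib import Path

# Matches inline Markdown links whose target ends in ".md", optionally with an
# anchor, e.g. [text](notes/api.md) or [text](rules.md#orders).
# Assumption: targets are taken literally, not resolved relative to the linking note.
LINK_RE = re.compile(r"\[[^\]]*\]\(([^)\s#]+\.md)(?:#[^)]*)?\)")


def extract_links(markdown: str) -> set[str]:
    """Return the set of .md targets referenced by inline links in one note."""
    return set(LINK_RE.findall(markdown))


def build_graph(notes: dict[str, str]) -> dict[str, set[str]]:
    """Map each note name to the note names it links to (an adjacency list)."""
    return {name: extract_links(text) for name, text in notes.items()}


def load_notes(root: str = ".mind") -> dict[str, str]:
    """Read every .md file under root, keyed by its path relative to root."""
    base = Path(root)
    return {
        p.relative_to(base).as_posix(): p.read_text(encoding="utf-8")
        for p in base.rglob("*.md")
    }


if __name__ == "__main__":
    notes = {
        "index.md": "Start at [architecture](architecture.md) and [rules](domain-rules.md).",
        "architecture.md": "Services follow the [domain rules](domain-rules.md#orders).",
        "domain-rules.md": "Orders must be idempotent.",
    }
    for note, targets in sorted(build_graph(notes).items()):
        print(note, "->", sorted(targets))
```

On a real folder, `build_graph(load_notes(".mind"))` would produce the same kind of map from files on disk.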

But then reality hit.


In the previous article, I explained how a simple folder (a structured .mind directory) can turn an AI assistant into something more stable, more consistent, and far more useful than a standard chat model.

But there is a missing ingredient that makes the AI mind truly powerful.

Not just memory…

but sleep.

Just like humans don’t grow while awake — they grow while resting —

an AI software development agent becomes significantly more capable when it has a structured cycle to organize, compress, and re-link all the knowledge it has learned.

This single idea dramatically enhances the agent’s intelligence and helps build software systems much faster.
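One way to picture a single "sleep" pass is as a maintenance job over the note graph: after a working session, it looks for links that point at notes that no longer exist and for notes that nothing links to, so they can be re-linked, merged, or compressed. The sketch below is an illustrative assumption, not the exact cycle described here. It takes an adjacency map of note name to linked note names (for example, one built by parsing Markdown links), and `sleep_report`, `SleepReport`, and the `index.md` entry note are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SleepReport:
    """Findings from one hypothetical consolidation ("sleep") pass."""
    broken_links: list[tuple[str, str]] = field(default_factory=list)  # (source, missing target)
    orphans: list[str] = field(default_factory=list)  # notes no other note links to


def sleep_report(graph: dict[str, set[str]], entry: str = "index.md") -> SleepReport:
    """Find links to missing notes and notes with no inbound link.

    `graph` maps each note name to the set of note names it links to.
    `entry` is the assumed root note, which is never reported as an orphan.
    Self-links do not count as inbound links.
    """
    report = SleepReport()
    linked_to: set[str] = set()
    for source in sorted(graph):
        for target in sorted(graph[source]):
            if target not in graph:
                report.broken_links.append((source, target))
            elif target != source:
                linked_to.add(target)
    report.orphans = sorted(n for n in graph if n not in linked_to and n != entry)
    return report


if __name__ == "__main__":
    graph = {
        "index.md": {"architecture.md", "old-api.md"},
        "architecture.md": {"domain-rules.md"},
        "domain-rules.md": set(),
        "scratch-2024-05.md": set(),
    }
    result = sleep_report(graph)
    print("broken:", result.broken_links)
    print("orphans:", result.orphans)
```

Here the pass would flag `index.md -> old-api.md` as a dangling link and `scratch-2024-05.md` as a note to re-link or fold into an existing one.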


Software development has never been just “writing code.” It’s a constant negotiation between requirements, architecture, tests, documentation, and the long shadow of past decisions. What slows teams down is not a lack of skill — it’s the constant rebuilding of context. Senior developers excel not because they are smarter, but because they remember.

AI can reach that level — but only if we give it continuity.

Modern language models recognize patterns extremely well, yet they forget everything between conversations. Without a memory layer, even the most advanced AI behaves like an intern starting from zero every day.

This is why many teams turn toward heavy solutions like vector databases, embedding pipelines, or multi-agent orchestration. These are useful when your data is chaotic and comes from emails, PDFs, documents, and logs.

But software development is nothing like that.

Our domain is beautifully simple:

- code

- documentation

- architecture

- tests

- decisions

- domain rules

- schemas

Everything is text. Everything is structured. Everything fits together.

Because of this, the best solution isn’t a giant retrieval system —

it’s a clean memory folder plus a disciplined prompting method.
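As a rough illustration of pairing a memory folder with a disciplined prompt, the sketch below assembles a context preamble from a fixed, ordered list of memory files and stops adding files once a character budget is reached, so lower-priority memory is dropped first. The file names, their order, the budget, and the function `build_context` are all assumptions for illustration; a real setup would choose its own layout and would likely count tokens rather than characters.

```python
import tempfile
from pathlib import Path

# Hypothetical .mind layout, ordered from most to least essential.
MEMORY_ORDER = [
    "domain-rules.md",
    "architecture.md",
    "decisions.md",
    "schemas.md",
    "tests.md",
]


def build_context(root: str, task: str, budget_chars: int = 12_000) -> str:
    """Concatenate memory files in priority order, then append the task.

    Missing files are skipped. Once the next file would exceed the character
    budget, assembly stops; the task section is always included.
    """
    sections: list[str] = []
    used = 0
    for name in MEMORY_ORDER:
        path = Path(root) / name
        if not path.is_file():
            continue
        block = f"## {name}\n{path.read_text(encoding='utf-8').strip()}\n"
        if used + len(block) > budget_chars:
            break
        sections.append(block)
        used += len(block)
    sections.append(f"## Task\n{task.strip()}\n")
    return "\n".join(sections)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        Path(tmp, "domain-rules.md").write_text("Orders must be idempotent.\n")
        Path(tmp, "architecture.md").write_text("One service per bounded context.\n")
        print(build_context(tmp, "Add a refund endpoint."))
```

Putting domain rules first reflects the idea that the constraints a model must never violate should survive any truncation.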

Been having fun creating AI-generated music videos lately — and honestly, I enjoy every moment of it.

You can check out more of them on my channel if you’re curious. (/¯◡ ‿ ◡)/¯ ~ ┻━┻

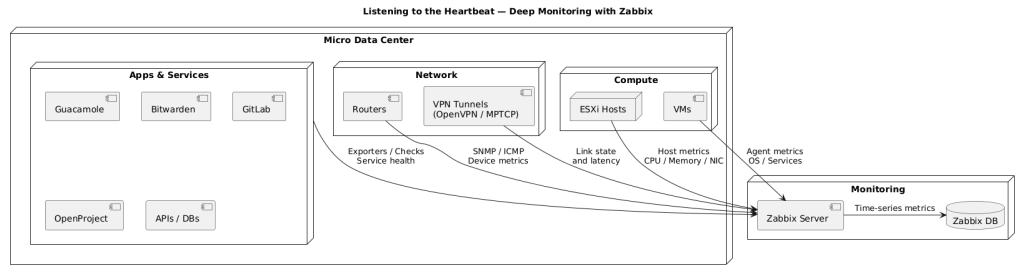

Every system has a heartbeat.

In my Micro Data Center, that heartbeat is digital — pulsing through routers, virtual machines, and power lines that keep everything alive.

Over the years, this small lab has grown into something bigger: a personal research environment where cloud concepts meet on-premise control.

Dozens of systems now run together — OpenVPN tunnels, MPTCP links, UPS units, and ESXi hosts — all working in quiet coordination.

Keeping that entire ecosystem in sync requires one thing above all: awareness.

Listening to the Heartbeat

At first, I relied entirely on Zabbix.

It gave me precision — deep analytics, metrics, triggers, and graphs that told me how every process behaved.

It’s the engineer’s microscope: accurate, reliable, and data-rich.

When most people picture data centers, they imagine vast warehouses filled with racks of servers, industrial cooling systems, and power consumption measured in hundreds of megawatts. Yet there is another category: the Micro Data Center. Defined broadly as a facility with a power draw of no more than 1–2 megawatts, micro data centers are compact, efficient, and tailored for specialized use cases.
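Megawatts measure power, not energy, so the 1–2 MW threshold is best read as a ceiling on continuous draw. Assuming a facility ran at a full 1 MW around the clock, a year of operation (8760 hours) would come to:

$$
E = P \cdot t = 1\,\text{MW} \times 8760\,\text{h} = 8760\,\text{MWh} \approx 8.76\,\text{GWh}
$$

At 2 MW the figure doubles to about 17.5 GWh per year.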

For me, a micro data center represents freedom and learning. It’s a research platform, a way to manage aspects of my digital life outside the commercial cloud, and a personal laboratory for exploring cybersecurity. Running such an environment means solving the same kinds of challenges that hyperscale providers face—chief among them, reliable connectivity.

Commercial providers enjoy redundant fiber paths and uplinks measured in tens of gigabits per second. A home lab, by contrast, must rely on residential DSL and wireless connections, stitched together with creativity and careful engineering. That’s where my journey began.


Introduction

In my home lab, I’ve spent countless hours experimenting with VPN tunneling and multi-WAN aggregation to maximize bandwidth, improve resiliency, and keep traffic secure. On paper, combining OpenVPN with MPTCP (Multipath TCP) should give the best of both worlds: encrypted transport with the ability to harness the sum of multiple internet links.

The reality, however, was very different. When I ran OpenVPN in TCP mode over MPTCP, I ran into the dreaded TCP-over-TCP meltdown. At first, everything seemed fine—throughput scaled nicely, and all WAN links contributed. But after some time, the performance would collapse. VPN sessions would degrade to the speed of a single link, latency would spike, and the benefits of multi-WAN vanished.
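The meltdown comes from stacking two retransmission timers. A toy model makes the mechanism visible: the inner TCP (the connection carried inside OpenVPN) computes its retransmission timeout from recent RTT samples using the RFC 6298 estimator, while the outer TCP path stalls for its own retransmission timeouts after a loss. If that stall pushes the delay past the inner timeout, the inner layer resends data the outer layer is already recovering, adding duplicate traffic to a path that is already struggling. The RTT values, the single-path loss scenario, and the function names below are illustrative assumptions, not measurements from this setup.

```python
def rfc6298_rto(samples: list[float], k: float = 4.0, g: float = 0.0,
                alpha: float = 1 / 8, beta: float = 1 / 4,
                min_rto: float = 1.0) -> float:
    """Retransmission timeout in seconds after a series of RTT samples (RFC 6298)."""
    srtt = rttvar = None
    rto = 1.0  # initial RTO before any RTT sample, per RFC 6298
    for r in samples:
        if srtt is None:
            srtt, rttvar = r, r / 2
        else:
            # RTTVAR is updated with the old SRTT, then SRTT is updated.
            rttvar = (1 - beta) * rttvar + beta * abs(srtt - r)
            srtt = (1 - alpha) * srtt + alpha * r
        rto = max(min_rto, srtt + max(g, k * rttvar))
    return rto


def inner_retransmits(base_rtt: float, outer_rto: float, outer_losses: int,
                      inner_rto: float) -> int:
    """Toy count of redundant inner retransmissions while the outer TCP recovers.

    Each consecutive outer loss doubles the outer RTO (exponential backoff),
    so the tunneled segment is delayed by the sum of those timeouts. Each time
    the inner timer expires during that stall, the inner TCP resends a segment
    the outer TCP is still delivering, and the inner RTO doubles as well.
    """
    stall = sum(outer_rto * 2 ** i for i in range(outer_losses))
    delay = base_rtt + stall
    count, waited, rto = 0, 0.0, inner_rto
    while waited + rto < delay:
        waited += rto
        count += 1
        rto *= 2
    return count


if __name__ == "__main__":
    inner = rfc6298_rto([0.05] * 20)  # a stable 50 ms path gives the 1 s floor
    for losses in range(4):
        n = inner_retransmits(0.05, 1.0, losses, inner)
        print(f"outer losses={losses}: redundant inner retransmits={n}")
```

In this model every additional outer loss adds another useless inner retransmission. RFC 6298 recommends a 1-second minimum RTO; Linux uses a lower floor, which lets the inner timer fire even sooner.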

This article documents my journey through the problem, why it happens, the dead ends I explored (like UDP-based VPNs), and finally the automation trick that solved the issue.


1. The Question That Started It All

As a computer engineer, I’ve always been fascinated by the space between abstract theory and tangible experience.

Few theories capture that gap more than string theory (Wikipedia), the ambitious framework suggesting that every particle and force is built from unimaginably small, vibrating strings.

At its heart lies the Polyakov Action (Wikipedia), a mathematical formulation describing how strings move through spacetime. Elegant on paper, it’s intimidating to visualize — and for most people, it stays locked as equations in a textbook.
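For reference, the Polyakov action is commonly written as follows (sign and metric-signature conventions vary between textbooks). Here $\sigma^a = (\tau, \sigma)$ are coordinates on the string's two-dimensional worldsheet, $h_{ab}$ is the worldsheet metric with determinant $h$, $X^\mu(\tau, \sigma)$ gives the string's position in spacetime, $\eta_{\mu\nu}$ is the flat spacetime metric, and $T$ is the string tension:

$$
S_P = -\frac{T}{2} \int d^2\sigma \, \sqrt{-h}\, h^{ab}\, \partial_a X^\mu \, \partial_b X^\nu \, \eta_{\mu\nu}
$$

with $T = 1/(2\pi\alpha')$ in terms of the Regge slope $\alpha'$.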

One day, while studying string theory purely for fun, I asked myself:

“What does this actually look like?”

I decided to stop reading and start seeing.


Have you ever imagined building your own private cloud from scratch? That’s exactly what I set out to do. So, I rolled up my sleeves, turned my home into a mini data center, and embarked on a mission to create a private, micro-scale cloud that combines computing and storage power. Why? Because it’s not just about the cool factor—it’s about scaling operations, mastering IT and cybersecurity, and, of course, keeping my data safe from the ever-watchful Skynet.

However, I quickly realized that the main threat to this project is connectivity—ensuring stable and sufficient bandwidth is essential for making such a setup practical and reliable. I needed to handle both outbound and inbound connectivity scaling to make this feasible. Intrigued? Let’s discuss the theory of the solution, but first, let’s define the problem.


OPNsense Removes OpenVPN Advanced Settings: A Security Flaw?

Posted on October 18, 2025 By RoofMan in Pulses


So here we go again. OPNsense has removed the Advanced section from the OpenVPN instance settings in its new version. Their excuse? “Security reasons.”

Let’s be honest—this is pure bullshit. That “Advanced” section was exactly where power users like me could fine-tune and push OpenVPN beyond the cookie-cutter defaults. Stripping it out doesn’t magically make anything safer. If anything, it just dumbs down the software and cripples flexibility for people who actually know what they’re doing.


Tags: Advanced Settings, cybersecurity, Firewall Security, IT Infrastructure, Network Administration, Network Management, Open Source Software, openvpn, opnsense, System Hardening, Tech Commentary, VPN Configuration